
We also show that FastMap is able to saturate state-of-the-art fast storage devices when used by a large number of cores, where Linux mmap fails to scale. A memory-mapped file I/O approach trades higher memory usage for speed, which is classically called the space-time tradeoff.

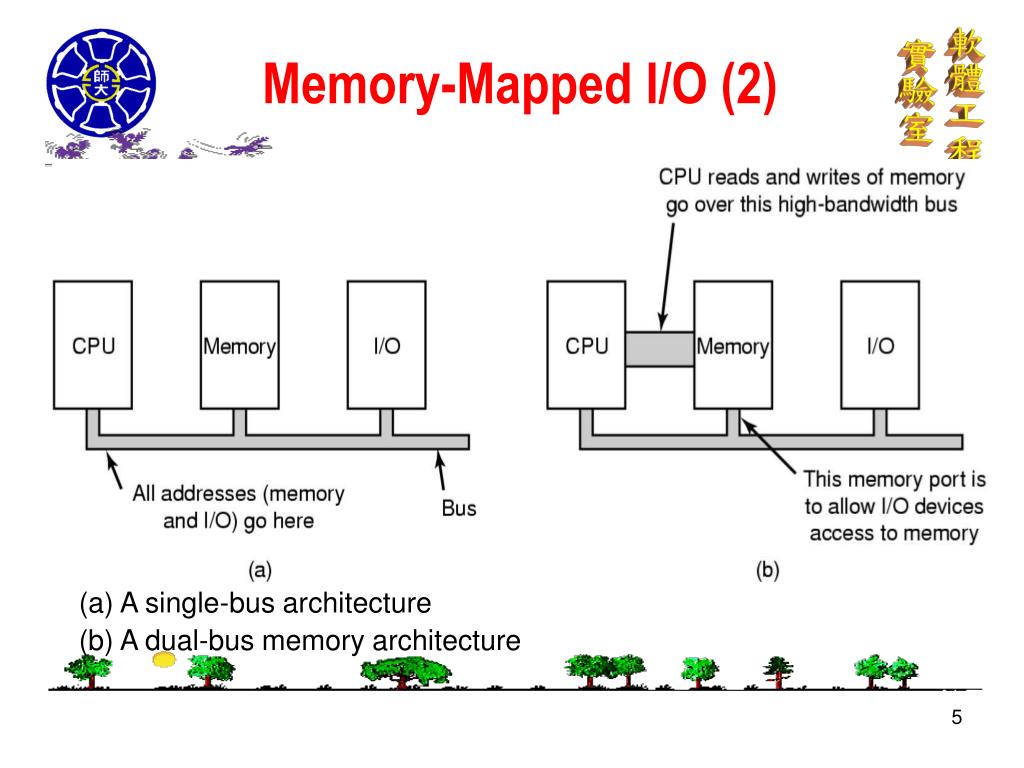

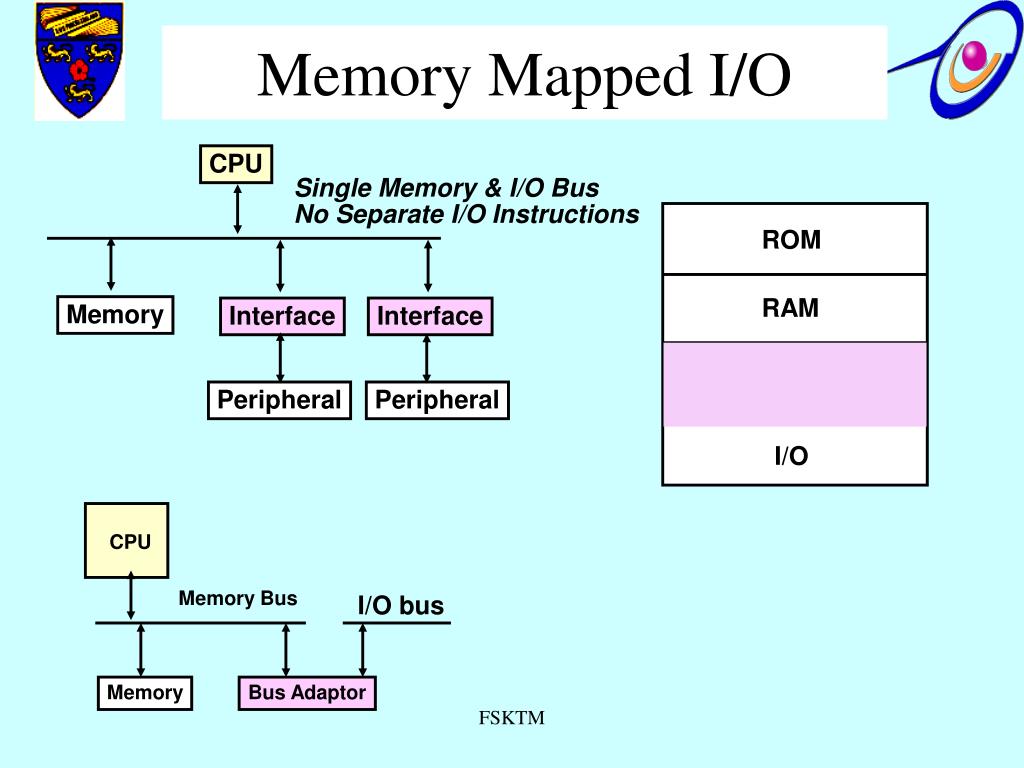

We show that the performance of Linux memory-mapped I/O does not scale beyond 8 threads on a 32-core server. To overcome these limitations, we propose FastMap, an alternative design for the memory-mapped I/O path in Linux that provides scalable access to fast storage devices in multi-core servers, by reducing synchronization overhead in the common path. FastMap also increases device queue depth, an important factor to achieve peak device throughput. Our experimental analysis shows that FastMap scales up to 80 cores and provides up to 11.8× more IOPS compared to mmap using null_blk. Additionally, it provides up to 5.27× higher throughput using an Optane SSD.

Memory-mapped I/O (not to be confused with memory-mapped file I/O) uses the same address bus to address both memory and I/O devices. Port-mapped I/O uses the same address bus with a dedicated address space. The only exception to this are the off-core devices (e.g. …

Memory-mapping files has a huge advantage over other forms of I/O: code simplicity. It's really hard to beat the simplicity of accessing a file as if it's in memory. And most times, the difference in performance between memory-mapping a file and doing discrete I/O operations isn't all that much anyway. Since the memory-mapped file is handled internally in pages, linear file access (as seen, for example, in flat file data storage or configuration files) requires disk access only when a new page boundary is crossed, and can write larger sections of the file to disk in a single operation. Our programs never see this change directly; they only face occasional delays in memory-mapped I/O.

In this article, I will only talk about memory-mapped writing. I will take a bioinformatics-related task as the running example. Feel free to transform it to your own domain and play around.
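To make the idea of memory-mapped writing concrete, here is a minimal sketch using Python's standard `mmap` module. The function name `write_sequence_mmap`, the file name `reads.txt`, and the short DNA string are all made up for illustration; they stand in for whatever bioinformatics data you actually have.

```python
import mmap
import os
import tempfile


def write_sequence_mmap(path: str, sequence: bytes) -> None:
    """Write `sequence` to `path` through a memory mapping (illustrative helper)."""
    # A mapping cannot extend past the end of the file, so size the file first.
    with open(path, "wb") as f:
        f.truncate(len(sequence))

    with open(path, "r+b") as f:
        with mmap.mmap(f.fileno(), len(sequence)) as mm:
            # Slice assignment stores into the mapped pages instead of calling write().
            mm[:] = sequence
            # flush() asks the OS to write the modified pages back to the file.
            mm.flush()


# Hypothetical input: a tiny DNA fragment written to a temporary file.
path = os.path.join(tempfile.mkdtemp(), "reads.txt")
write_sequence_mmap(path, b"ACGTACGTTTGA")

with open(path, "rb") as f:
    print(f.read())  # b'ACGTACGTTTGA'
```

Note that the file is resized before it is mapped. This sketch assumes a non-empty sequence, since an empty file cannot be mapped.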

In memory-mapped I/O, I/O devices are mapped into the system's address space alongside RAM and ROM. Port-mapped I/O instead relies on dedicated instructions: for example, Intel x86 processors use the IN and OUT instructions to read and write data, respectively.

Memory-mapped I/O provides several potential advantages over explicit read/write I/O, especially for low latency devices: (1) It does not require a system call, (2) it incurs almost zero overhead for data in memory (I/O cache hits), and (3) it removes copies between kernel and user space. However, the Linux memory-mapped I/O path suffers from several scalability limitations.
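The difference between explicit read I/O and memory-mapped access can be sketched in Python as follows. This is only an illustration, not a benchmark: the helper names `read_slice_explicit` and `read_slice_mapped` and the file `data.bin` are hypothetical, and Python's slicing still copies the requested bytes into a new object in user space (a `memoryview` over the mapping would avoid that).

```python
import mmap
import os
import tempfile


def read_slice_explicit(path: str, offset: int, length: int) -> bytes:
    # Explicit I/O: each access goes through seek/read, which issue
    # system calls and copy data from the kernel into a user buffer.
    with open(path, "rb") as f:
        f.seek(offset)
        return f.read(length)


def read_slice_mapped(mm: mmap.mmap, offset: int, length: int) -> bytes:
    # Mapped I/O: the file is addressed like memory. No read() call is made;
    # the kernel is involved only when a touched page is not yet resident.
    return mm[offset:offset + length]


# Hypothetical test file: 4096 bytes with a repeating 0..255 pattern.
path = os.path.join(tempfile.mkdtemp(), "data.bin")
with open(path, "wb") as f:
    f.write(bytes(range(256)) * 16)

with open(path, "rb") as f:
    # Length 0 maps the whole file; ACCESS_READ makes the mapping read-only.
    with mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mm:
        a = read_slice_explicit(path, 1000, 8)
        b = read_slice_mapped(mm, 1000, 8)

print(a == b)  # True
```

Both paths return the same bytes. What changes is how the data reaches the program.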
